Understanding Virtual Experiences

Let’s take a look at the types of digital realities that exist today and how they differ from each other. There are three major types of virtual experiences:

▪ Virtual Reality (VR)

▪ Augmented Reality (AR)

▪ Mixed Reality (MR)

Unfortunately, there is a lot of confusion about what these technologies really are and how they differ from one another. The best way to explain them is to define the three types of digital reality, introduce some of the use cases, and identify some of the major hardware and software players in the industry.

Virtual Reality (VR)

Unlike augmented reality (AR) or mixed reality (MR), which add virtual elements to physical reality, virtual reality completely replaces reality with a virtual world. Computing power and optics simulate a visual and auditory experience that seeks to fool the user into believing the virtual world is a real one.

Virtual reality (VR) is most commonly associated with immersive computer games in which an entire virtual world has been completely created, with scenery, buildings, tools, weapons, and players that have the ability to gain strength and powers to accomplish the game’s missions. Beyond gaming, there are several practical applications that are in the early stages of development.

VR Hardware

VR headsets fall into two categories: tethered and untethered. Tethered headsets are cabled to a computer or game console, leveraging its processing power while the headset itself focuses on the display, sound, and spatial orientation. The three dominant tethered headsets are:

▪ HTC Vive

▪ Oculus Rift

▪ Sony PlayStation VR

These three headsets, with price points in the $400 to $800 range, focus on creating a completely immersive experience with high-end graphics and sound, low-latency movement tracking systems (so that the virtual scenes change in response to any head or body movement with no perceptible delay), and hand controllers that add to the realism of being “inside the game.”

In the untethered category, cheaper headsets ($50 to $150 range) use a smartphone as their processor; Samsung Gear VR and Google Daydream View are two examples. The price is roughly an order of magnitude lower than for the tethered versions, and the functionality and precision of the untethered headsets are reduced accordingly.

Augmented Reality (AR)

Though experts expect VR to enter the lives of average consumers in the coming years, augmented reality (AR) is trailing a bit farther behind. AR enhances reality by layering information or virtual elements over a direct view of actual reality. Much of what the user sees is still the real world, but additional information (such as navigation cues or social media notifications) is overlaid. The AR experience centers on utility: the goal of the virtual notifications and overlaid information is to be useful to the user.

AR can leverage the smartphone’s ability to be a sensing/presentation device, so the barrier to using an application, from a hardware perspective, is much lower than for a tethered headset.

However, the limitations of integrating the real with the virtual arise from the limitations of the phone or tablet itself. Information captured from the real world is two-dimensional. While there is real-world object recognition and location determination, and a resulting graphic overlay that augments or replaces the real-world image, there is no true integration of the real and the virtual. The virtual content remains an information overlay.

The ability to pull out a smartphone, point it at an object such as a restaurant, a hotel, or a street in a residential area, and be presented with relevant information will have real value.

Mixed Reality (MR)

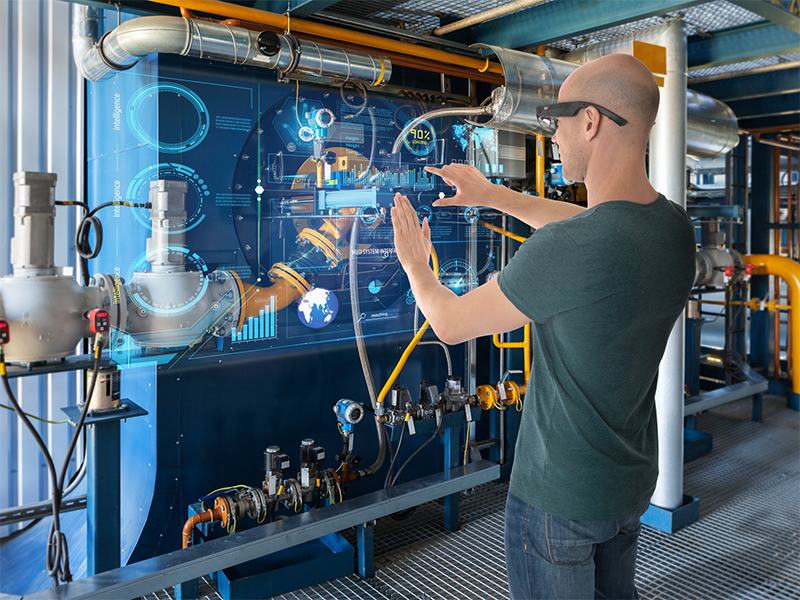

MR is often confused with AR since both involve a direct, live view of the real world. MR combines digitally rendered, interactive objects with the real environment. But unlike AR, which layers objects and information on top of the existing world, MR integrates believable virtual objects into reality. These virtual objects can be treated just like their real-world counterparts.

To achieve this physical/digital visual integration, three-dimensional real-world sensing has to capture and process not only width and height, but also depth. This requires real-time triangulation, similar to eyesight, whereby two points of view provide stereoscopic information for object sizing and placement.
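The triangulation idea can be made concrete with a small sketch. It assumes an idealized pair of identical, parallel cameras, for which depth equals focal length times camera spacing divided by disparity (the horizontal shift of a point between the two views). The function name and the rig values below are hypothetical, chosen only for illustration; real MR devices use more elaborate sensing and calibration.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Estimate distance (in meters) to a point seen by two parallel cameras.

    focal_length_px: focal length expressed in pixels
    baseline_m:      distance between the two camera centers, in meters
    disparity_px:    horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        # Zero disparity corresponds to a point infinitely far away.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical rig: 700 px focal length, cameras 6.5 cm apart
# (roughly the spacing of human eyes).
for disparity in (91.0, 45.5, 9.1):
    depth = depth_from_disparity(700.0, 0.065, disparity)
    print(f"disparity {disparity:5.1f} px -> depth {depth:.2f} m")
```

Nearby points shift more between the two views than distant ones, so a larger disparity means a smaller depth. Once depth is known, a virtual object can be scaled and positioned so that it appears to sit at the right distance in the real scene.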

Hardware associated with mixed reality includes Microsoft’s HoloLens, which is expected to be a major MR platform, although Microsoft has sidestepped the AR/MR debate by introducing yet another term: “holographic computing.” Microsoft has also announced a HoloLens emulator so that developers can build applications for the new technology.

Of all the realities discussed here, mixed reality seems the furthest from fruition. However, it’s not impossible to imagine a future where synthetic content will be able to react to and even interact with the real world in some way.